Words are hard - why natural language is a bottleneck for interaction

Director of Innovation

Everywhere you look you’ll find text prompts to talk to machines. The boom is thanks to large language models and their ability to respond to human language with something useful. But, in many circumstances, it isn’t a good interaction pattern.

Everyone brings baggage with them to Innovation. It’s very rare to meet an innovation professional who’s spent their entire career in Innovation, since it’s such an ill-formed, and quite new, discipline. My baggage is interaction design, and the way folks are imagining future human-machine interactions is making my toes curl.

Text as a universal interface

There’s been a buzz on Twitter - and other networks - about the possibilities that large language models offer interaction design. Large language models are so good at parsing human words that everybody is very excited about language becoming the universal interface.

By way of example, there’s Reid Hoffman, co-founder of LinkedIn, with ‘Language is the universal interface’; Sucks saying, ‘ordering food via whatsapp has made me realize how insanely stupid it is we make everyone learn our ridiculous app interface or whatever’; and Y Combinator alumnus Garry Tan saying that large language models as a UX will be a revolution. Meanwhile, startups like Adept are building SaaS businesses around the idea.

Over on HN, a post by Scale got a lot of traction by building on Douglas McIlroy’s summary of the UNIX philosophy: "programs [should] handle text, because that is a universal interface."

Leave aside the fact that all these suggestions would require giving LLMs access to tools, which, based on our article last week, would be a very bad thing. The idea also asks us to forget that the UNIX command line was largely supplanted by graphical user interfaces, because humans can interact much more easily with machines through symbols and images than through words.

What you can learn from smart speakers

In 2016 I worked at an agency where our main client was Google. We were doing a lot of experimentation with smart speakers, natural language processing and understanding user intent. Down the pub one Friday, I grandly announced that my future would be in Voice Interaction Design. Reader, it was not. Once we all got smart speakers into our homes, a few things happened:

- They felt slightly creepy

- All any of us could remember was “Hey, play Spotify…”

- They started collecting dust

At Dyson I was involved in some of the interaction design work looking at how products could be controlled by smart speakers or phone assistants. The results of our research were an eye-opener, with the highest failure rate I’ve ever encountered in any user research I’ve done. Using language as an interface made people uncomfortable, and they failed over and over and over again.

Why words are hard

Everyone’s favourite behavioural psychologist, Daniel Kahneman, popularised the concept of system 1 and system 2 thinking in his 2011 book Thinking, Fast and Slow. System 1 runs automatically; system 2 requires active thought.

Humans are always trying to conserve energy, so we like to stay in system 1 - on autopilot - as much as possible. Thinking is hard, and words require thinking, especially when those words need to coherently express intent.

The complexity of the tasks we use machines for makes this worse. Let’s consider an example that’s popular with VC types: booking flights. A simple version would be:

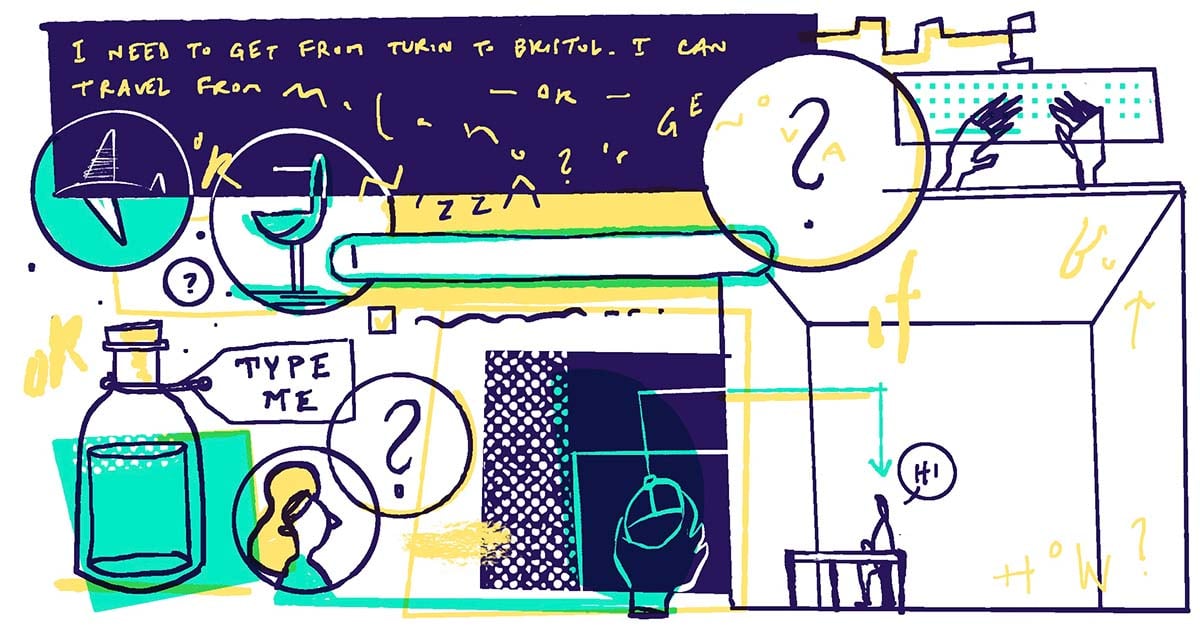

Find flights from SF to LA at 9am on Sunday with a return for 11pm on Wednesday.

For most people, the real world is more complex. I live about an hour south of Turin in Italy, but lived for years in Bristol, UK. Travelling between these two secondary cities is not easy. There are loads of trade-offs, and human language is a sub-optimal way to express them to a machine.

A simplified version of that interaction might be:

I need to travel between Turin and Bristol. I can hypothetically travel from any of the Milan airports, Lyon, Nice or Genoa. I’ll fly to Heathrow but none of the other London airports. I don’t want to fly to Cardiff or Birmingham. I need to leave on Tuesday after I’ve dropped the kids at school and be back for Friday before the kids get out of school. I’d like some way to assuage my guilt for destroying the planet by flying. K. Thanks.

That’s hard for me to write, and I don’t have high hopes that a machine would be able to handle the request.

Don’t make me think (or worry)

Steve Krug wrote Don’t Make Me Think way back in 2000. His book builds on John Sweller’s idea of cognitive load, where tasks become harder as more of our working memory is used up. Edward Gibson showed in his pithily titled paper Dependency Locality Theory that our working memory fills up very quickly when we process sentences.

Ambiguity, or lack of clarity, within the interface makes that worse. If we’re uncertain about the context we’re in or the response we’re going to receive, we need to think more. Jakob Nielsen’s first heuristic for designing user-centred products is to always keep the system status visible. That’s very hard to do with a text interface. In fact, it’s reasonable to say that only two of Nielsen’s ten heuristics would fit a text interface.

Within any human-machine interaction, the state of the person is also critical. Last week Chayn published an incredible deep dive into why they took down their chatbot in 2020: people in crisis found the interaction too difficult.

All this to say, it might be better to think like Paul Fitts and give folks a really big button to hit!

Pick a flamingo, any flamingo

For my article about embodying large language models, I put together Choose Shapes Obliquely, a silly app that lets you create an SVG shape by describing it.

The motivation for the app was to demonstrate that parsing human language of limited complexity doesn’t require a large language model. In the words of Sasha Luccioni, climate lead at Hugging Face, for simple tasks like this, “[using an LLM is] like using a microscope to hammer in a nail - it might do the job but that’s not really what this tool is meant for.”

As I built it, though, I realised that natural language was a terrible way to select a shape. With a text box as the only input, it’s impossible to know whether a shape exists until you ask for it. Even with some clues about what to type, like examples of working prompts, it’s a frustrating experience.
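
The kind of limited-complexity parsing described above doesn’t need much machinery. What follows is a hypothetical keyword matcher, not the code behind Choose Shapes Obliquely: the `parse_prompt` function, the shape and colour vocabulary, and the SVG markup are all assumptions made for illustration. It also shows the discoverability problem, since a request for a flamingo simply returns nothing and gives the user no clue which shapes do exist.

```python
import re
from typing import Optional

# Hypothetical vocabulary (an assumption for illustration): the only shapes
# and colours this toy parser knows about.
SHAPES = {
    "circle": '<circle cx="50" cy="50" r="40" fill="{colour}" />',
    "square": '<rect x="10" y="10" width="80" height="80" fill="{colour}" />',
    "triangle": '<polygon points="50,10 90,90 10,90" fill="{colour}" />',
}
COLOURS = {"red", "green", "blue", "pink", "black"}


def parse_prompt(prompt: str) -> Optional[str]:
    """Return an SVG document if the prompt names a known shape, else None."""
    # Lower-case the prompt and split it into plain alphabetic words.
    words = re.findall(r"[a-z]+", prompt.lower())
    # Take the first word that exactly matches a known shape.
    shape = next((w for w in words if w in SHAPES), None)
    if shape is None:
        # Unknown request: the text box gives no hint about what would have worked.
        return None
    # Use the first recognised colour, defaulting to black.
    colour = next((w for w in words if w in COLOURS), "black")
    body = SHAPES[shape].format(colour=colour)
    return f'<svg xmlns="http://www.w3.org/2000/svg" viewBox="0 0 100 100">{body}</svg>'


if __name__ == "__main__":
    print(parse_prompt("Draw me a big red circle, please"))
    print(parse_prompt("A pink flamingo, any flamingo"))  # None
```

Even this tiny parser fails on "two circles" or "a round thing", because it only matches exact words from its hidden vocabulary. That is precisely the guesswork a lone text box pushes onto the user.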

As innovators, designers and creators, we need to tread carefully with the applications we’re building. Otherwise we risk ending up in a world where we’re surrounded by text inputs and human-machine interactions have been taken back to the 1980s.